Large language models—what we’ve come to call Artificial Intelligence (AI)—have made a very aggressive entrance into our lives. In less than five years, a billion people worldwide are using AI applications in an impressive variety of ways. The sudden, widespread, and—by all measures—still-growing use of AI has brought to the forefront many, sometimes pressing, questions about nearly every aspect of human life. Will it take away jobs? Can it improve diagnosis and prevention in healthcare? Will it make businesses more productive? How will it change education? What exactly are we talking about when we communicate with a language model? How should we handle the information, advice, or opinions we exchange? And, ultimately, how can such a massive technological wave be effectively regulated?

These questions naturally concern the people of Greece as well. Month after month, Greek men and women are interacting more and more with these apps. In this anniversary year, marking ten years of public operation, diaNEOsis aims to bring the dialogue on Artificial Intelligence to the forefront with a rich program of research initiatives, events, and discussions. The publication of a new opinion poll mapping the beliefs, perceptions, and attitudes of Greeks toward AI is just the beginning of this new series of content. In this context, it also serves as an initial exploration that will highlight the most important questions on people’s minds—some of which will later be explored in greater depth and analyzed in specific areas.

Read the full results report (.PDF)

The diaNEOsis survey, presented below, was conducted by Metron Analysis through telephone and online interviews with a representative nationwide sample of 1,111 people between January 8 and 13, 2026. It presents the mixed—though not yet very strong—feelings of people in the country regarding the use of AI, the areas citizens believe will be positively or negatively affected, the sometimes significant differences between users and non-users, as well as the near-universal acceptance that certain regulatory initiatives may enjoy. At the same time, two analyses of the survey results are being published, offering an initial assessment. The first is authored by the Metron Analysis team—Stratos Fanaras, Yannis Balabanidis, and Penny Apostolopoulou—and the second by sociologist, postdoctoral researcher at the Hellenic Open University (HOU), and lecturer at the National and Kapodistrian University of Athens (NKUA), Heracles Vogiatzis. Below are some key findings from the study.

The gap between users and non-users

Read a summary presentation of the results (.PDF)

It seems that in Greece, as in many other parts of the world, people are adopting AI at a remarkable rate. At first glance, almost everyone—95%—says they are familiar with AI, and of those, only 15% say they have merely heard of it. In contrast, 8 out of 10 confidently state that they know what AI is. A total of 65%, a clear majority of the Greek population, says that they not only know about it but have also used AI. Just three months ago, in October 2025, in another survey by Metron Analysis, this percentage was 18 points lower, at 47%.

Self-reported users have a very distinct profile and differ significantly from non-users. They are about 10 percentage points more likely to be men than women, are consistently younger (although, of course, only among those over 65 are non-users more numerous than users), and they are also much more likely to have a college education and a higher income. Finally, they are less likely to support political extremes.

Significant differences between users and non-users are evident in many questions. Often, the divide between the two groups is so pronounced that the average is insufficient to provide a comprehensive picture. For example, the two emotions most likely to be reported regarding AI are, in order, “Interest” (55%) and “Caution/Skepticism” (46%). However, the two groups are worlds apart: Among users, “Interest” dominates (68%, +42 points compared to non-users), while among non-users, “Caution” prevails (65%, +29 points compared to users).

Productivity, but at a psychological cost

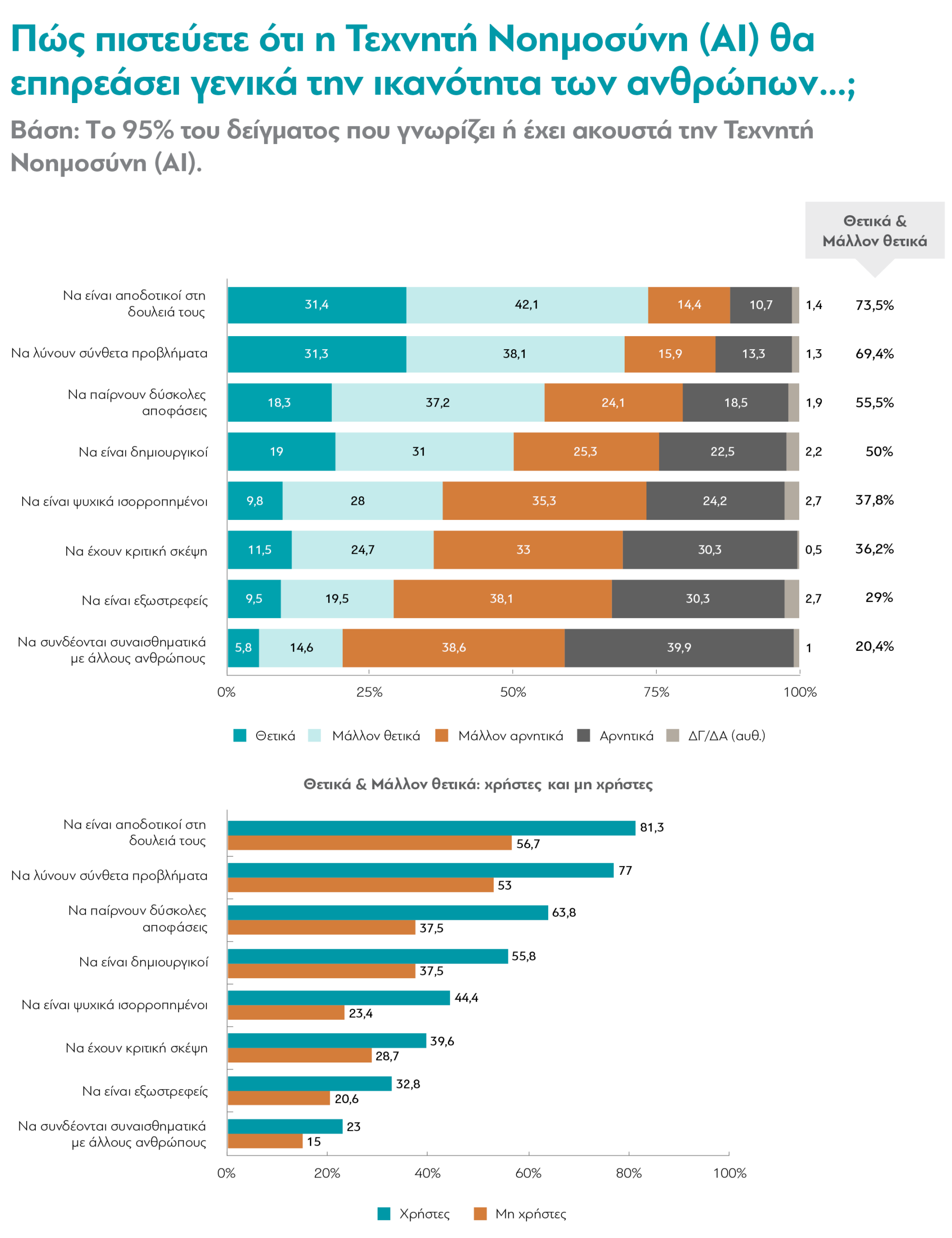

Another area where such a difference in perceptions is evident is in expectations regarding AI. Overall, the responses that garnered more than 50% in positive expectations are linked to productivity and related skills. More than half believe that AI will help us be productive at work, solve complex problems, make difficult decisions, and be creative. Conversely, more respondents believe that functions related to the emotional realm (being mentally balanced, being extroverted, or connecting emotionally with others) will be negatively affected. Clearly, more people also believe that critical thinking skills will be harmed.

However, here too, users are much more optimistic than non-users. Although the order of the most frequent responses is roughly the same, a majority of non-users (and a much more cautious one) believes that AI will improve only work efficiency and the ability to solve complex problems. For none of the other options do the (mostly) positive responses exceed 50%.

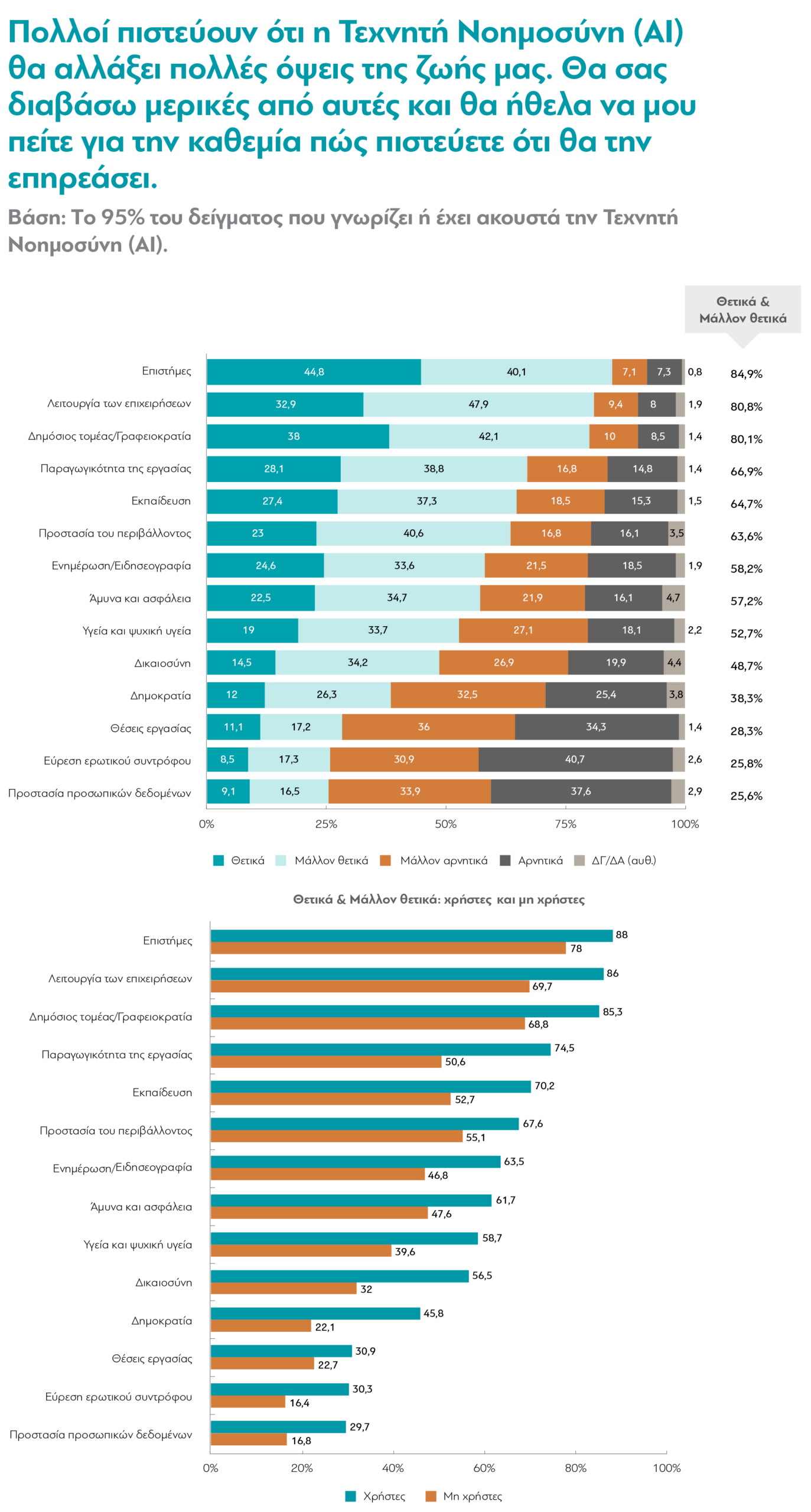

The same tension between benefits for science and the economy and risks to social and democratic life is also evident in the questions about how specific aspects of life will be affected. Areas related to science and technology lead the way in positive responses. Respondents believe that AI will benefit “Science” (85% positive and somewhat positive), “Business Operations” (81%), “Labor Productivity” (67%), “Education” (65%), “Environmental Protection” (64%), “Defense and Security” (57%), and, to a much lesser extent, “Health and Mental Health” (53%). In contrast, there appears to be significant concern regarding areas related to institutions and rights: “Justice” (47% negative and somewhat negative), “Democracy” (58%), and “Data protection” (72%). In fact, this picture emerges even though a very large majority—8 out of 10—believes that AI will improve the public sector by reducing bureaucracy. It is also interesting that in “Information and Journalism,” a field at the center of discussions about the ethical risks of AI, a clear majority (58%) believes it will be positively affected. Finally, in line with the high percentage of people who are pessimistic about how AI will affect emotional connections with other people, the same is true regarding expected changes in finding a romantic partner: 72% believe that AI will make it more difficult.

But how can all these thoughts, experiences, and concerns regarding the use of AI, as outlined above, be reflected in our everyday behavior? How ready are we—beyond simply using AI and thinking about how it will shape the future—to actually incorporate it into our lives as they are today and “trust” it with something, even if it’s just a small thing? Another question in the survey concerns customer service across various sectors. The survey cites banks, healthcare, and public utilities as examples and asks how citizens prefer to be served for matters that are neither important nor urgent: by a human or by AI? A very clear majority (87%) prefers a human. However, here too, users differ from non-users: Far more users (17%—about 1 in 6) prefer to interact with AI.

Information and Regulation

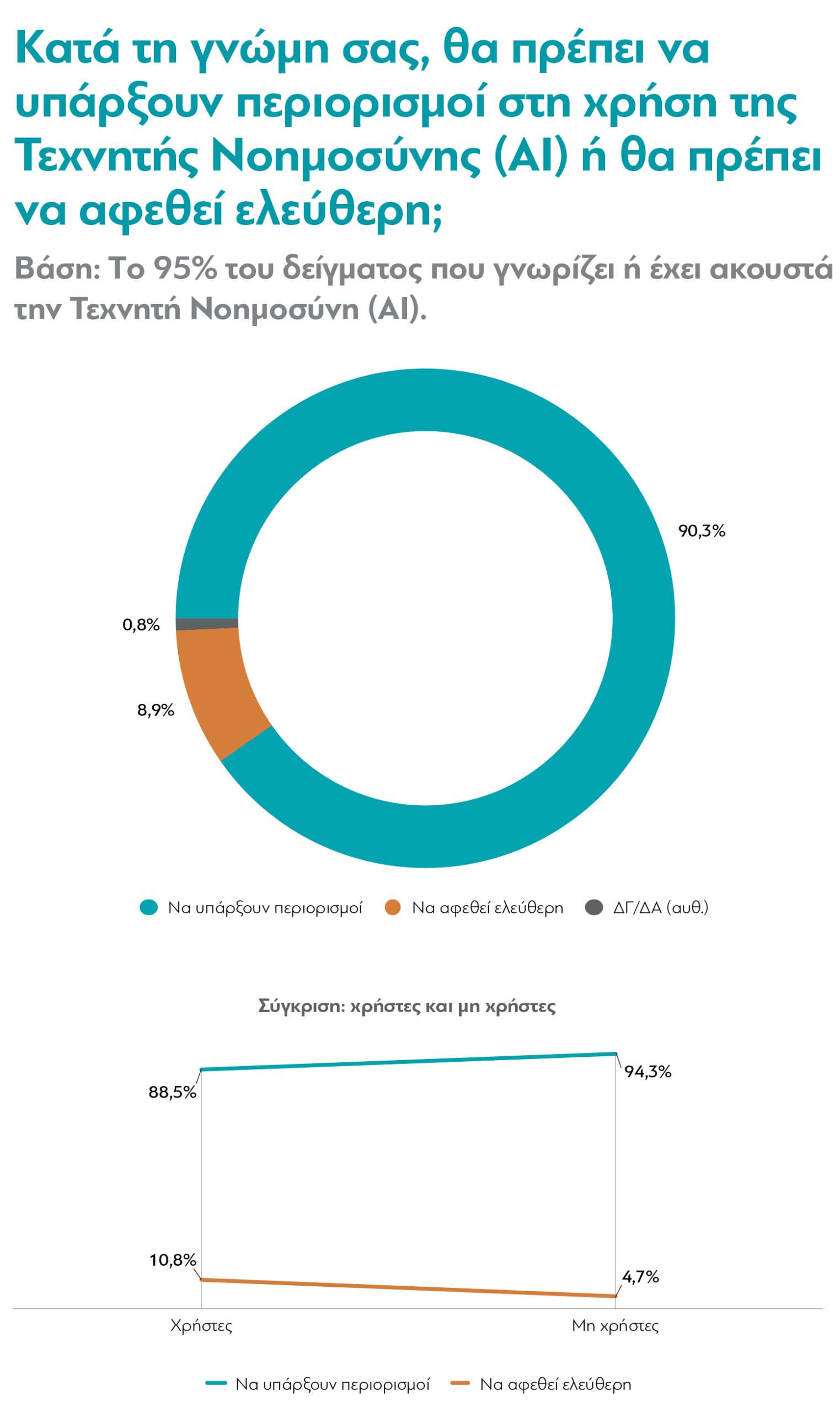

Furthermore, it appears that Greeks are almost universally calling for regulations on the use of artificial intelligence. When asked whether there should be restrictions on the use of AI, 9 out of 10 respondents clearly favor restrictions. They likely recognize that societies today are unprepared to absorb the disruption of this new reality without effective institutions. However, there are still—not huge but notable—differences between users and non-users. Specifically, while relatively few, more than twice as many users as non-users (11% versus 5%) believe that AI should be “left to run free.”

Finally, before any regulations are put in place, who is best positioned to inform citizens (whether they are current users, non-users, or future users) about AI, its risks, and its opportunities? In response to this question, universities and research centers stand out, with 74% expressing absolute or considerable trust. Coming in second, but trailing by 19 percentage points, are family members and friends. Next, in a “range” of 38% to 49% trust, comes a series of institutions: technology and AI companies, professional organizations, independent authorities, the EU, and international organizations. Respondents place diaNEOsis fourth in the ranking of entities by combined trust, with 42% expressing absolute or considerable trust. With significantly lower percentages, around 30%, employers, the government, and the media follow; these are institutions that generally garner low levels of trust, and not just in relation to AI.

User habits and perceptions

The survey also focuses extensively on AI users, devoting several questions specifically to them. What are the habits of this large and rapidly growing segment of the Greek population when they use these applications? As for platforms, ChatGPT appears to be by far the dominant one, with 75% stating that they use it very or fairly often—a percentage roughly double that of the next most frequently mentioned platform, Google AI Overview.

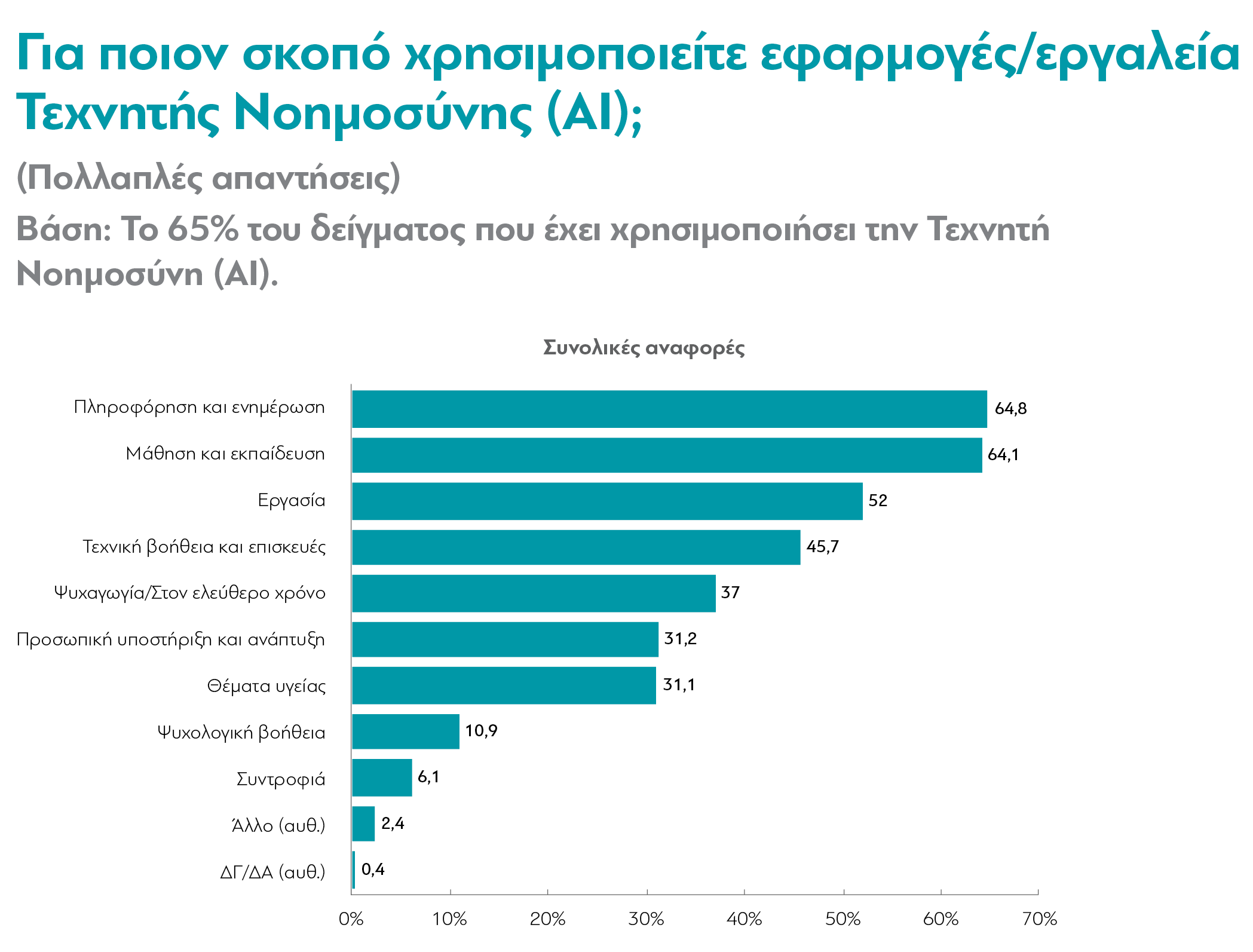

Beyond these specific applications, however, for what purposes do Greeks use AI? In response to this question, “Information/News” and “Education” lead the way with rates close to 65%, followed by “Work” at 52%. It is also evident from other parts of the survey that citizens view AI as particularly useful for similar functions. “Technical assistance and repairs” (46%) and “Health issues” (31%) are also frequently mentioned. Although significantly lower, the percentages of those who say they use AI more broadly as a conversation partner rather than for a specific purpose or task are not negligible, especially when viewed in combination: 37% say they use it for “Entertainment or leisure activities,” 31% for “Personal development,” and fewer for “Psychological support” (11%) or “Companionship” (6%).

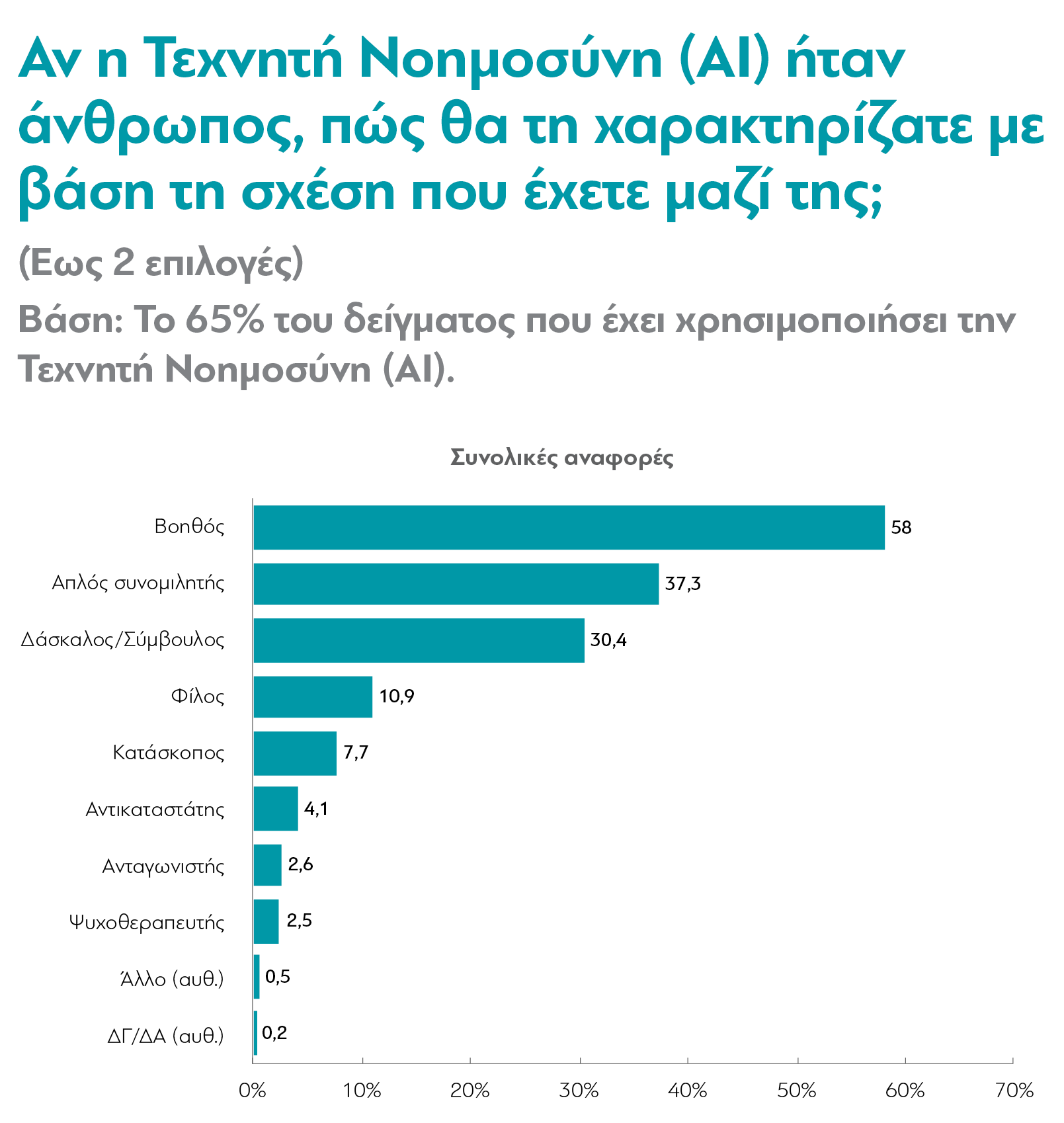

It appears, however, that despite the human-like communication, the majority of users realize that AI is a computational linguistic model and not a human being. Later in the survey, most (58%) state that they view AI as an “Assistant,” a “Simple conversational partner” (37%), or a “Teacher/Advisor” (30%)—all of which imply some degree of boundary-setting or distancing. In contrast, the more emotionally charged roles (“Friend,” “Spy,” “Replacement,” “Competitor,” “Psychotherapist”) are cited by 11% of the sample or less each.

This realism also prevails when the survey asks AI users how much they trust the results it provides: only 5% say they trust them completely, although among 17- to 24-year-olds that percentage is double. In contrast, the vast majority respond that they trust the results somewhat (64%) or a little (28%). But what do they do with results they are unsure of? Most (87%) say they keep trying until they find a more convincing answer. Of these, 84% say they cross-check with other online sources, while about one in three report that they ask the AI itself for confirmation or documentation, presumably by entering additional prompts, which is also a critical skill.

However, there is no shortage of contradictions in the responses regarding the reliability of the results. Although a striking number of users say they are ready to double-check answers that don’t seem right, it’s likely that many are positively predisposed toward the results they receive before they get to the point of questioning them. In another part of the survey, 7 out of 10 users (mostly) agree that Artificial Intelligence is “impartial and objective,” half agree that “it has access to reliable sources and doesn’t make mistakes,” and 40% agree that “It has superior logic to that of humans.”

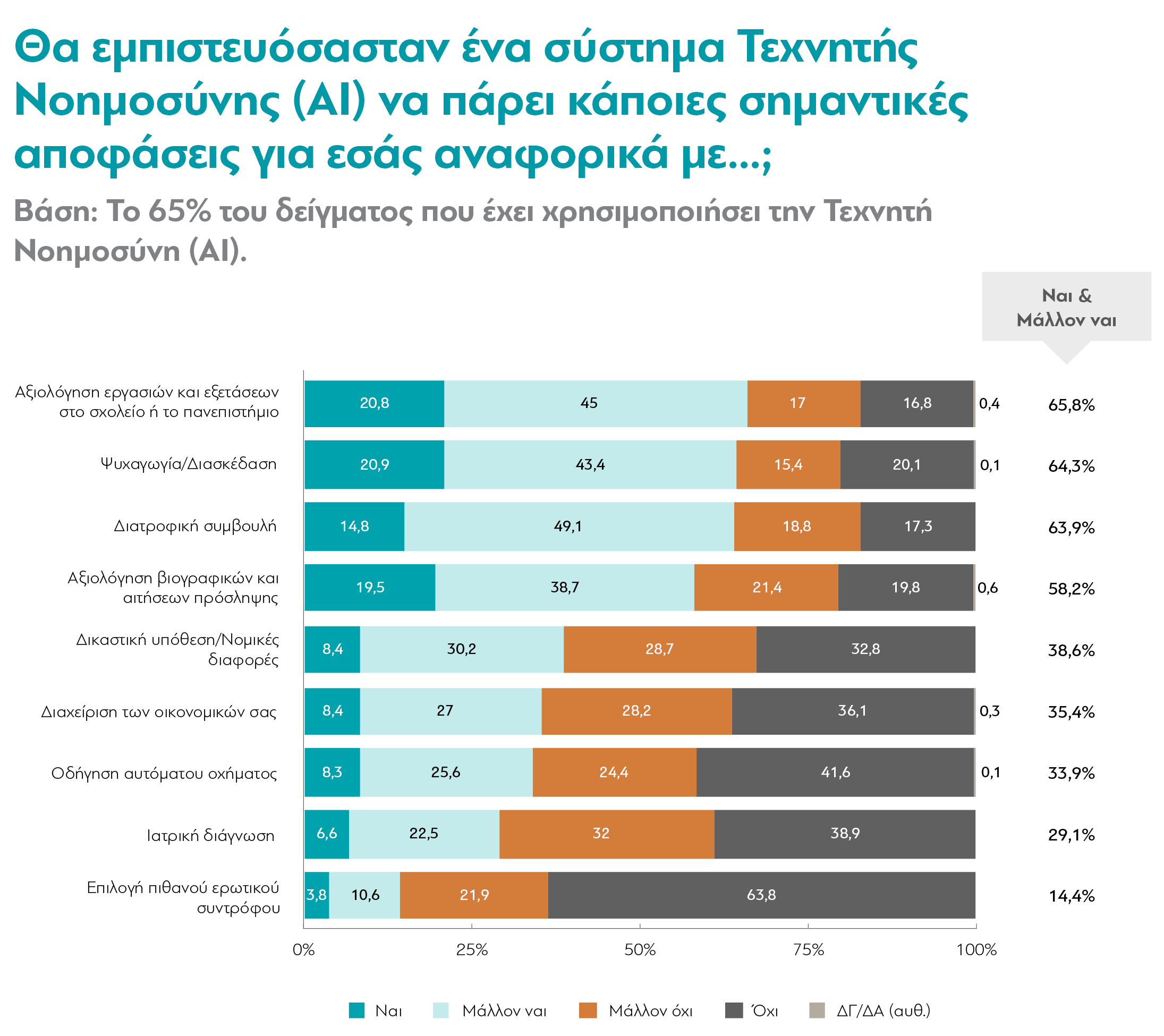

It is worth dwelling a little longer on one more question, the one asking respondents to indicate which types of decisions they would trust AI to make on their behalf. An impressive 66%, or 2 out of 3, are in favor of having school or university assignments and exams evaluated by AI. It is equally interesting that about 6 in 10 say they trust AI to evaluate their resume or a job application. Fewer users, but still a significant number (about 4 in 10), would also trust AI to handle a court case or provide them with legal advice.

These are decisions that are anything but trivial. In fact, many of them are linked to so-called “algorithmic bias,” that is, the fact that the model behind AI applications tends to reproduce the biases present in the data used to train it. If, for example, a language model evaluates resumes based on data from previous human hiring decisions, then it will incorporate and reproduce any human biases that appear systematically in that data. In other words, the result may be that fewer women, people of color, and so on are hired. Majority opinions also indicate a certain degree of trust regarding decisions that are likely less critical, such as those related to entertainment and leisure or dietary advice.

There is, however, another critical factor that likely plays a role in the responses to this specific question: the cost of a potential error in each of the above areas of application. Heracles Vogiatzis notes in his analysis that “the three categories with the most negative views (driving, medical diagnosis, and choosing a partner) include areas of human activity in which the cost of error is high.”

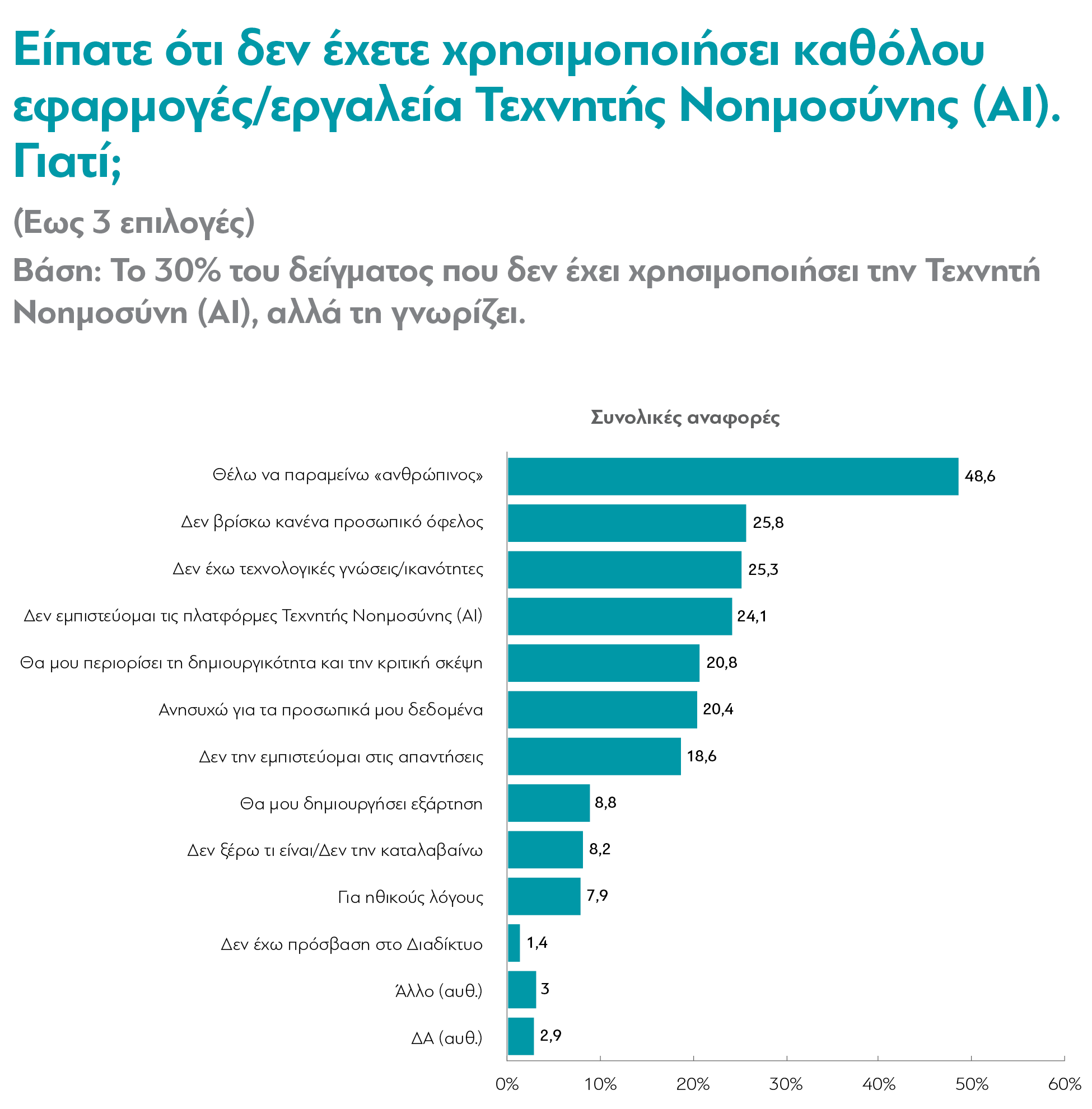

Finally, the survey poses a question exclusively to non-users, who, as noted above, are much more hesitant and cautious than those who identify as users. So why aren’t they using AI after all? The share of those who choose the answer “Because I want to remain ‘human’” is striking. This option is much more abstract than the others in the same question (“I lack technical knowledge,” “I’m concerned about my personal data,” etc.), but it ranks first in terms of mentions (49%), with a significant gap of nearly 23 points from the next most frequently cited reason (“I don’t see any personal benefit”). As noted in the Metron Analysis report, “It seems that a key, and perhaps fundamental, reason for skepticism toward AI is skepticism regarding the excessive intrusion of machines into human life.”

Read the analysis by Heracles Vogiatzis (.PDF)

However, Heracles Vogiatzis believes that this fear may “mask” other underlying causes, such as inequalities in access. “One interpretation of the data could suggest that the fear of the alienation of human nature […] is not always the result of insufficient knowledge or a refusal to change,” he writes. “Sometimes, it is related to limited access to technology, issues of opacity, and heightened vigilance regarding issues of rights and the political economy of technologies.”

Gender and Emotions

Having reviewed the survey results above, it is worth highlighting two additional points, which are also emphasized by the authors of the two accompanying reports. First, they identify notable differences in the attitudes of women and men. Women are less likely to use AI (or to report that they do); moreover, they tend to be more distanced from related applications. As the Metron Analysis report points out, women more often see AI as “just someone to talk to,” whereas men “tend to describe a more ‘close’ relationship with AI in the role of a teacher.” Similarly, women report being about 8 percentage points less optimistic than men and are also significantly less likely (48%, 13 percentage points less than men) to express interest. They are, moreover, much more likely to express fear.

In this context, and in an effort to explain these differences, Heracles Vogiatzis points out that some of the negative consequences of AI affect women more frequently: Among other things, virtual assistants/agents are presented as women, and the voices in most applications (e.g., maps) are usually female. Consequently, many AI applications reproduce gender stereotypes. Similarly, algorithmic biases perpetuate and reinforce existing gender biases.

The second point of interest concerns general feelings toward AI. Despite the intense interest and curiosity surrounding this new tool, the debate and the rapid developments surrounding artificial intelligence have also left many people feeling bewildered. “The ‘invasion’ of AI into our lives is quite recent and rapid,” according to the Metron Analysis report, “so that our feelings toward it have not yet taken on any depth, but remain at a level where this new phenomenon strikes us as interesting, since it concerns us, and at the same time we view it with suspicion, given the intense debate that has been sparked over whether it constitutes an opportunity or a threat to the future of humanity and human societies.” This coexistence of skepticism and curiosity is further evident in how respondents react to the AI’s answers when they do not seem correct. “When respondents are unsure about the accuracy of the answers they receive,” notes Heracles Vogiatzis, “the overwhelming majority do not abandon or reject the technology. At this point, it seems that interest outweighs skepticism.”

But how, ultimately, can this tension between optimism about the new tool and pessimism or fear of its negative consequences be reduced? What can AI users themselves—and, by extension, organized states—do about this? “Two responses emerge, bridging the fear-hope dichotomy,” writes Heracles Vogiatzis. “The first comes with the demand for restriction and regulation, and the second with experimentation and use […]. The issue is the framework within which we collaborate with machines. If they are accountable, explain their choices, and are open to being shaped by users, then the realistic optimism of public opinion and the high level of interest seem to create fertile ground.”

Ultimately, the picture that emerges from the survey is, on the whole, that of a society that is rapidly adopting Artificial Intelligence, but is not embracing it with either uncritical enthusiasm or deep-seated fear. The most striking finding is the gap between users and non-users: The former primarily see opportunities for productivity, learning, and problem-solving, while the latter approach AI applications with skepticism and concern about the loss of their human nature. At the same time, the near-universal call for regulation indicates that the public perceives AI as a wave that also requires ethical guidelines and accountability. In the coming period, diaNEOsis will revisit the topic of AI with even more material on the pressing issues highlighted by this first exploratory mapping of the perceptions of Greek men and women.

*The new major poll by diaNEOsis